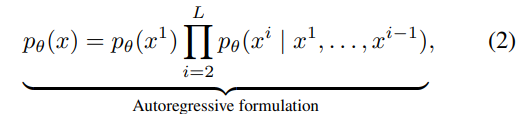

https://arxiv.org/abs/2307.06945

In-context Autoencoder for Context Compression in a Large Language Model

We propose the In-context Autoencoder (ICAE), leveraging the power of a large language model (LLM) to compress a long context into short compact memory slots that can be directly conditioned on by the LLM for various purposes. ICAE is first pretrained usin...

There are various approaches to coping with long contexts..